This Is How We Normalize AI

What you can do about it….

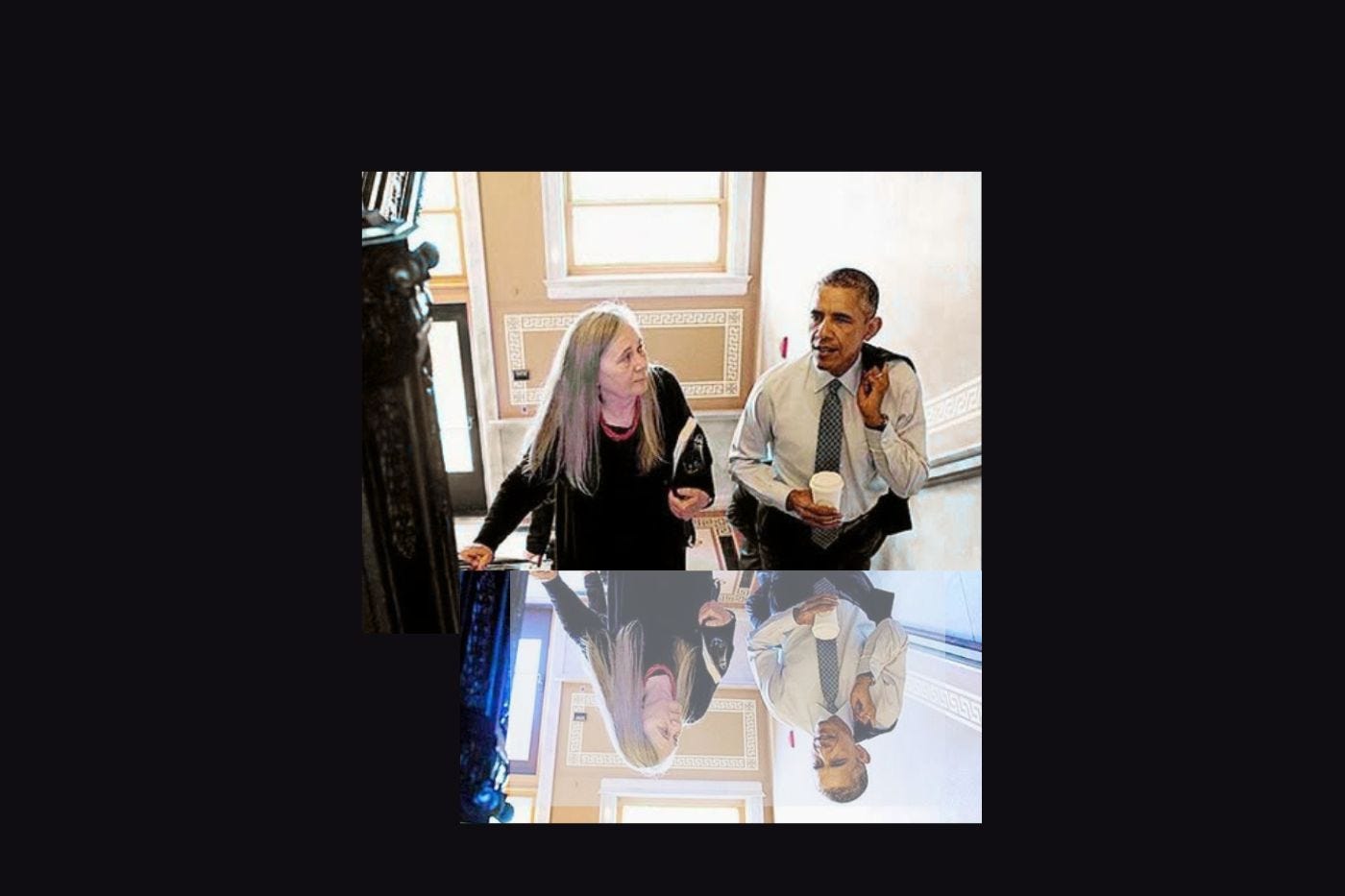

Yesterday I saw a photo on social media that caught my eye. It was from about ten years ago and showed then-President Barack Obama with Marilynne Robinson, author of Gilead, to whom he awarded the National Humanities Medal. All good!

But then I saw the text accompanying the photo. AI of the most egregious kind.

Take this sentence, for example: “[Reading Gilead] changed him in ways that rippled through every decision he would ever make in the White House.”

Seriously? If a human ever wrote that, they would have an editor to hold them accountable. We hope.

So I did what I tend to do, which was leave a comment. It said, in effect, “Why use an AI version of something that has truth to it? There are alternatives, such as finding a human-written account or writing one yourself.”

The poster and others lashed back with “Can’t you just enjoy it and love it for the value it brings?”

In other words, who cares if it was written by AI?

Well, I care. And I have ever since AI asserted itself in its current form. More than a year ago, for example, an author I know reposted AI material on her socials, and I questioned it. In that case, I wasn’t sure she realized it was AI. Turned out she knew, and she was so offended that she blocked me. No exaggeration: She blocked me in outrage over my query about her AI post.

But back to AI Obama. I took the extra seconds to check where that post originated. It turned out to come from a Facebook page with entirely AI-generated content, including similar stories featuring Jared Kushner, Princess Diana, and Bill Clinton, to name a few.

Then I looked at the page’s ownership history and found the owner listed under the name سكرة للخيوط والمستلزمات.

Google translates that as “Yarn and Threads.” The owner has a shell LinkedIn account with links to Pakistan and Jordan. Of course, there’s nothing nefarious about Arabic, but I have to ask: Why might this (non?)human be spending time putting out this content?

The more I think about all this, the more I wonder why we are choosing to normalize AI. Maybe it’s just easier to use AI, so we use it. Everybody’s doing it, so we do it too. It could be laziness, but I think it’s more than that. As we normalize AI, we lose our sensitivity to the difference between human and machine writing. It becomes the new normal.

Take that sentence I quoted. Why wouldn’t someone reading it—especially a writer—stop and go WTF?! How can they see it as something to enjoy? Why don’t they bother to check the source?

These questions may sound rhetorical, but they’re not. I really would like to know.

So here are some more questions, because I think if we’re going to have any chance of turning this tide, we’ll all need to consider them:

Do you ever post AI-generated text on social media? If so, what is your thought process?

When you see an AI-generated post, do you keep reading? Does it bother you at all?

When you see an AI-generated post, do you say anything in response?

This is not to say there are no uses for AI. I regularly use it for research. And I’ve used AI images for Substack illustrations. (Maybe one of these days I’ll write about AI and art….) But I draw the line at writing—which, whether it is in a book or a post, is my human expression and message.

Recently, I obtained a human-authored seal for the copyright page of my forthcoming novel, The Lie. I realize it is less than 100% meaningful, because nobody’s checking the book to see if it was really written by a human. But it’s the least I could do for a story that centers on the perils of AI.

Now I’m thinking books aren’t enough; perhaps I should put a human-authored label on my social media as a sign of my commitment not to post AI-generated writing. I’ll have to figure out how to add it, but it’s not a bad idea. Join me?